AI Model Risk Management Market Overview

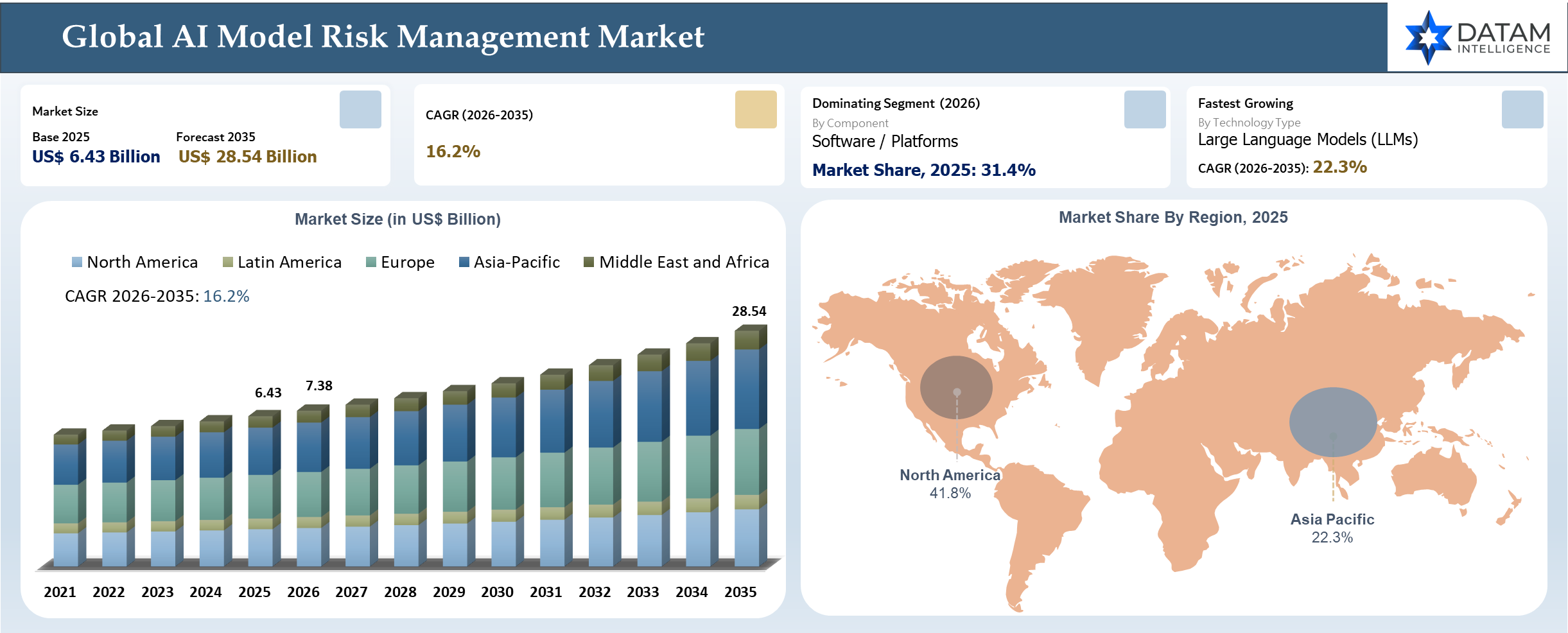

The global AI Model Risk Management market reached US$ 6.43 billion in 2025 and is expected to reach US$ 28.54 billion by 2035, growing at a CAGR of 16.2% during the forecast period 2026-2035. Enterprise adoption of artificial intelligence, generative AI, and large language models in applications such as fraud detection, credit underwriting, compliance management, customer interaction, and process automation is fueling demand for advanced AI governance and model risk management solutions. Growing concerns about model explainability, hallucinations, algorithmic bias, security, data privacy, and dependence on third-party AI solutions have led enterprises to invest heavily in capabilities such as model validation, AI observability, fairness evaluation, automated compliance reporting, and continuous monitoring.
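The headline figures above can be sanity-checked with the standard compound annual growth rate formula. The sketch below is an illustration only, not the report's methodology: the function name `cagr` and the choice of 10 compounding periods (2025 base year through 2035) are assumptions. Under that assumption the implied rate comes out close to, but slightly below, the stated 16.2%; the gap may reflect rounding or a different base-year convention.

```python
def cagr(start_value: float, end_value: float, periods: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / periods) - 1."""
    if start_value <= 0 or end_value <= 0 or periods <= 0:
        raise ValueError("values and periods must be positive")
    return (end_value / start_value) ** (1 / periods) - 1


# Market sizes in US$ billion, taken from the report.
# The 10-period span (2025 -> 2035) is an assumption made for this check.
implied = cagr(6.43, 28.54, 10)
print(f"Implied CAGR: {implied:.1%}")
```

Changing the `periods` argument shows how sensitive the result is to the base-year convention, which is worth checking whenever a report quotes a forecast window that starts a year after the base-year value.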

AI Model Risk Management Industry Trends and Strategic Insights

- The rapid adoption of generative AI, large language models, and agentic AI is reshaping traditional approaches to model governance, requiring organizations to add continuous observability, runtime monitoring, adversarial testing, and validation capabilities.

- Evolving global AI governance requirements, including the EU AI Act, updates to SR 11-7, the NIST AI RMF, and industry-specific regulations, are pushing organizations toward centralized, auditable AI risk management architectures built around explainability, traceability, fairness, and accountability.

- Financial services firms continue to be the leading users of AI-based risk management solutions because of growing applications of AI in fraud detection, underwriting, anti-money laundering, credit risk assessment, and other compliance processes. Growing operational risks related to financial decisions supported by AI technology have spurred the adoption of model validation and governance solutions.

Market Scope

| Metrics | Details | |

| 2025 Market Size | US$ 6.43 Billion | |

| 2035 Projected Market Size | US$ 28.54 Billion | |

| CAGR (2026-2035) | 16.2% | |

| Largest Market | North America | |

| Fastest Growing Market | Asia-Pacific | |

| By Component | Software / Platforms and Services | |

| By Deployment Mode | On-Premise and Cloud-Based | |

| By Organization Size | Large Enterprises and Small & Medium Enterprises (SMEs) | |

| By Risk Type | Model Performance, Operational, Compliance & Regulatory, Data Privacy, Cybersecurity & Adversarial, Bias & Ethical, Reputational, Strategic, Third-Party & Vendor and Others | |

| By Technology Type | Traditional Machine Learning Models, Deep Learning Models, Generative AI Models, Large Language Models (LLMs), Computer Vision Models, Natural Language Processing (NLP) Models, Reinforcement Learning Models | |

| By Industry Vertical | Banking, Financial Services & Insurance (BFSI), Healthcare & Life Sciences, IT & Telecommunications, Retail & E-commerce, Manufacturing, Government & Public Sector, Automotive & Mobility, Energy & Utilities, Aerospace & Defense, Media & Entertainment, Transportation & Logistics, Education and Others | |

| By Application | Fraud Detection & Prevention, Credit Risk Assessment, Regulatory Compliance Monitoring, Algorithmic Trading Supervision, Customer Analytics & Personalization Oversight, Predictive Maintenance Risk Monitoring, Healthcare Diagnosis Validation, Autonomous Systems Risk Assessment, Cybersecurity Threat Intelligence, Supply Chain Decision Monitoring, HR & Recruitment AI Governance and Insurance Underwriting Validation | |

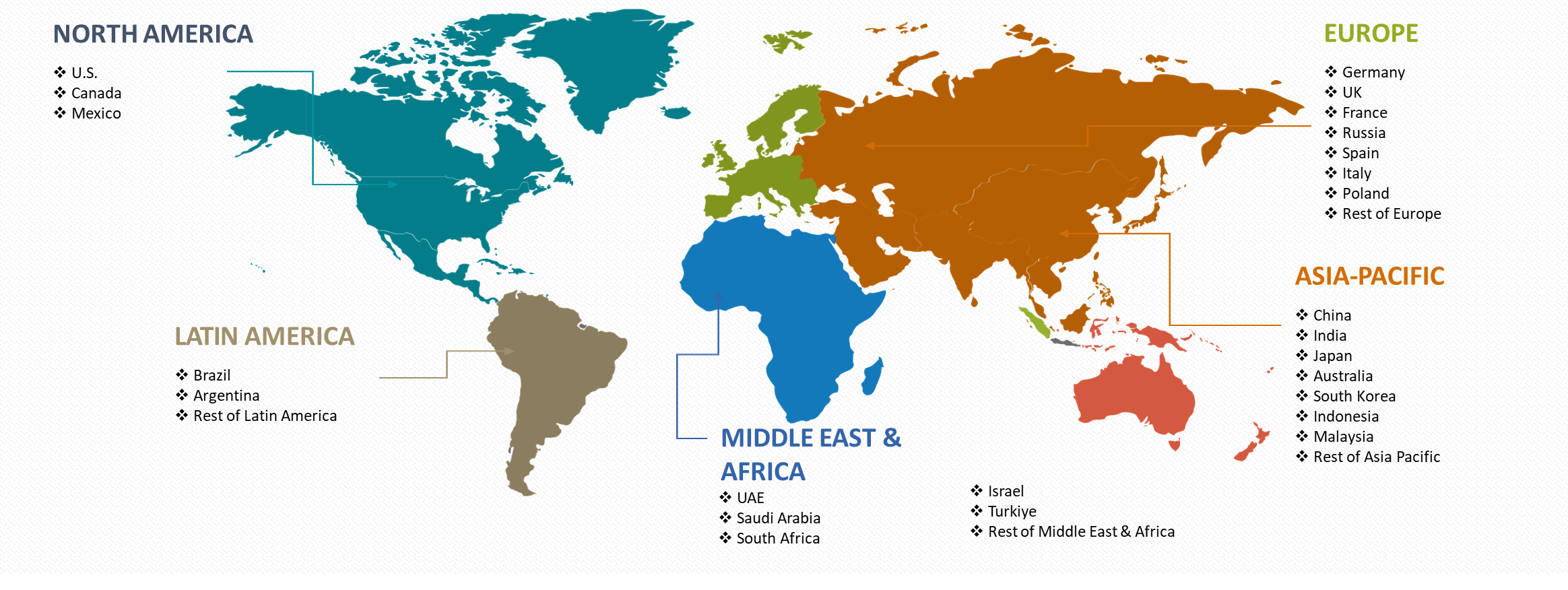

| By Region | North America | U.S., Canada, Mexico |

| Europe | Germany, UK, France, Spain, Italy, Poland | |

| Asia-Pacific | China, India, Japan, Australia, South Korea, Indonesia, Malaysia | |

| Latin America | Brazil, Argentina | |

| Middle East and Africa | UAE, Saudi Arabia, South Africa, Israel, Turkiye | |

| Report Insights Covered | Competitive Landscape Analysis, Company Profile Analysis, Market Size, Share, Growth | |

Disruption Analysis

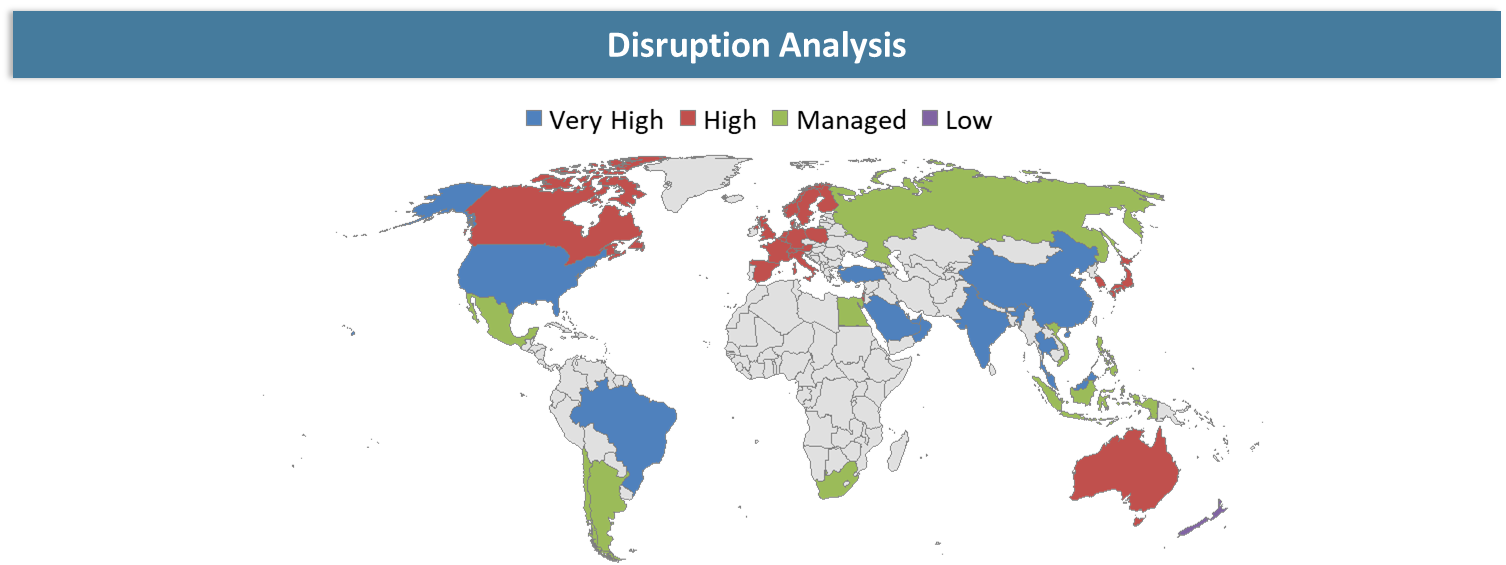

Enterprise Shift Toward Agentic AI Intensifies Pressure on Conventional Model Risk Controls

The shift of enterprise computing from traditional machine learning to generative AI and agentic AI platforms is fundamentally reshaping the AI model risk management environment. Organizations are increasingly embedding AI capabilities in financial analysis, customer engagement, underwriting, fraud prevention, and operational decision-making, which exposes them to volatile outputs, non-deterministic actions, real-time decision-making risks, and reliance on third-party foundation models. The trend is placing substantial pressure on firms to improve their governance, operations, and AI accountability.

Growing reliance on self-learning and continuously evolving AI technology is increasing the need for governance structures that enable dynamic validation, runtime monitoring, policy enforcement, and compliance-ready AI operations. Concerns about gaps in AI controls, uncontrolled model behavior, regulatory risk, and AI-related cybersecurity risk are prompting companies to upgrade their traditional governance structures and adopt centralized approaches to AI risk management.

BCG Matrix: Company Evaluation

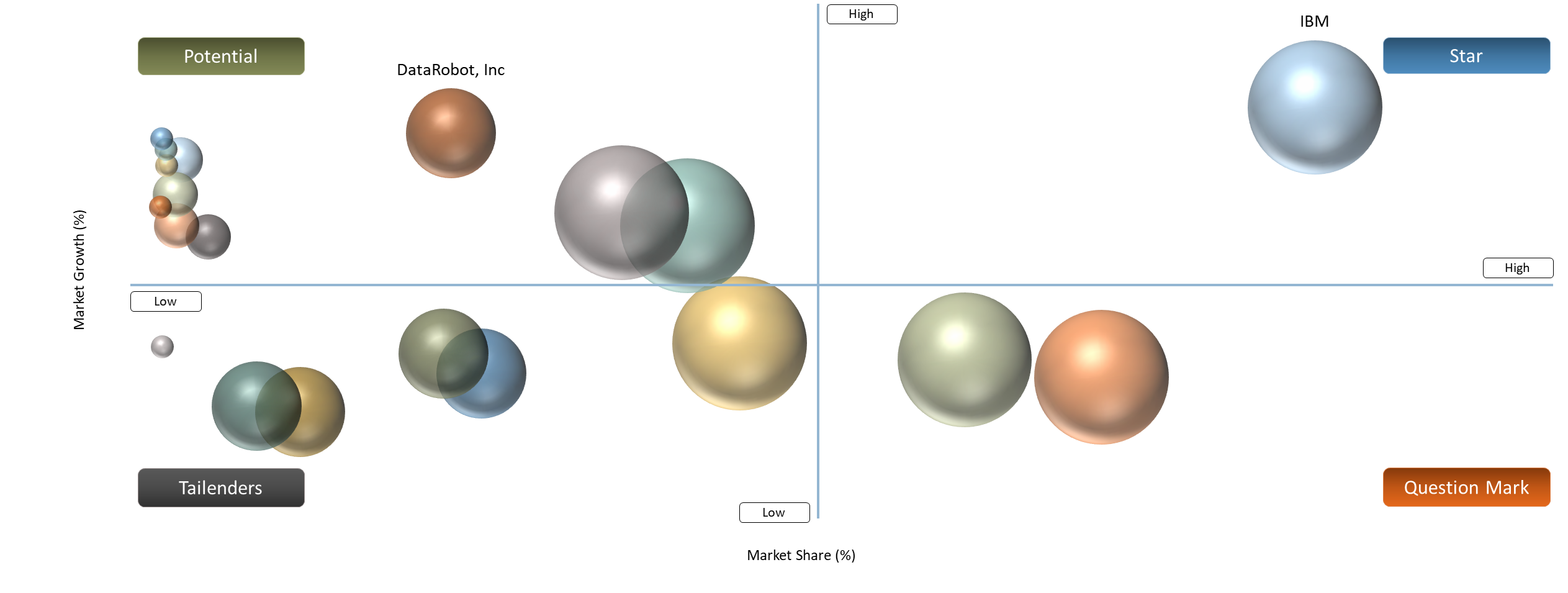

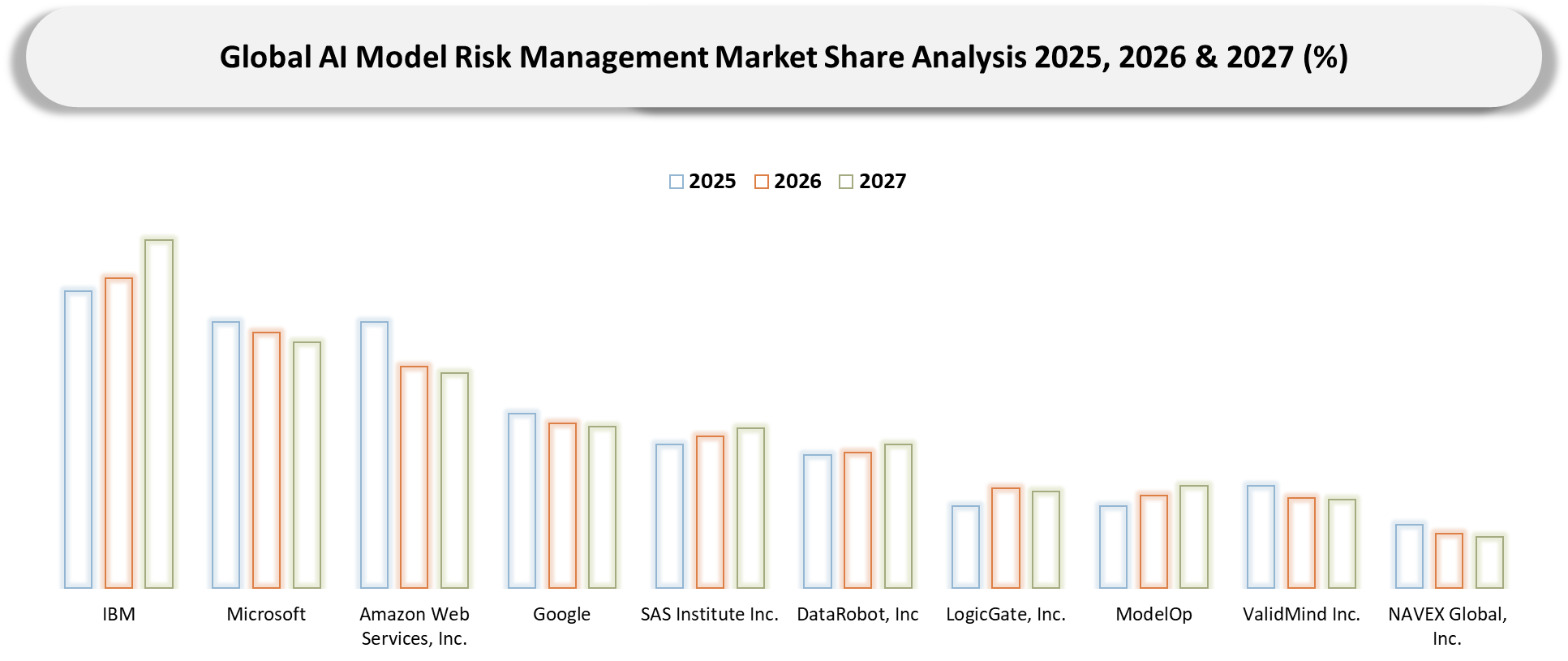

The AI model risk management market is competitive, spanning cloud hyperscalers, enterprise AI software vendors, governance providers, and regulatory technology vendors. IBM, Microsoft, AWS, Google, and SAS are identified as Star Players on the strength of their large enterprise client bases, robust AI governance offerings, comprehensive cloud ecosystems, and significant investment in explainable AI, automation, and AI model lifecycle monitoring platforms. DataRobot, H2O.ai, C3.ai, and Moody’s Analytics are considered Potential Players, driven by growing AI governance product portfolios, analytics strength, and penetration into the BFSI and healthcare verticals.

ModelOp, ValidMind, Credo AI, and Yields are gaining importance as Question Mark Players, improving their market positioning through niche offerings such as AI governance automation, model validation, audit preparedness, and compliance management. LogicGate and NAVEX Global are classed as tail-enders in AI model risk management because their operations cover governance and compliance more broadly.

Market Dynamics

Driver Impact Analysis

| Driver | Market Growth Impact (%) | Demand Concentration | Impacted Use Case | Strategic Impact |
| --- | --- | --- | --- | --- |
| Expansion of Generative AI and Large Language Model Deployments | 3.8% | Strong demand across North America, Europe, and Asia-Pacific driven by BFSI, healthcare, and enterprise technology sectors | AI copilots, autonomous agents, enterprise decision intelligence, customer service automation | Accelerates enterprise investments in AI governance, observability, and lifecycle risk management frameworks |
| Increasing Regulatory Focus on Responsible AI Governance | 3.3% | Concentrated in highly regulated markets including the US, European Union, UK, and Singapore | Regulatory compliance monitoring, model validation, auditability, AI transparency management | Strengthens adoption of centralized AI governance platforms aligned with evolving global compliance mandates |
| Rising Financial and Reputational Risks Associated with AI Failures | 2.9% | High demand across BFSI, insurance, healthcare, and government sectors globally | Fraud detection, underwriting, predictive analytics, AI-driven risk assessment | Drives continuous monitoring, explainability, and adversarial testing investments to reduce enterprise risk exposure |
| Increasing Adoption of AI in Financial Services and Banking Operations | 2.7% | Primarily concentrated in North America, Europe, and major Asia-Pacific financial hubs | Credit scoring, anti-money laundering, fraud analytics, algorithmic trading supervision | Expands demand for model governance, audit-ready AI infrastructure, and operational risk management solutions |

Rapid Expansion of Generative AI and Large Language Model Deployments

The rapid scaling of generative AI and large language models across industries such as finance, healthcare, insurance, retail, and government is driving a major increase in demand for AI model risk management. Organizations are embedding AI copilots, intelligent automation platforms, and AI-powered decision engines in key business processes to improve efficiency, customer interactions, and decision-making speed. This fast-growing deployment of AI is making model risk management increasingly complex.

Growing concerns about hallucinations, bias, prompt manipulation, exposure of personal data, adversarial attacks on models, and dependence on third-party AI solutions are prompting organizations to strengthen their governance and oversight mechanisms. Many enterprises are stepping up efforts to validate AI models, monitor their lifecycle, assess their fairness, generate compliance reports, and ensure that models are explainable. Growing regulatory attention around the EU AI Act, SR 11-7, GDPR, and other AI governance regulations is further spurring adoption of AI risk management technologies in regulated industries.

Restraint Impact Analysis

| Restraint | Drag on Market Growth (%) | Primary Impact Area | Impacted Use Case | Strategic Impact |
| --- | --- | --- | --- | --- |
| Fragmented Global AI Governance Regulations | 3.1% | Cross-border AI compliance management and enterprise governance standardization | Multi-region AI deployments, regulatory reporting, global AI operations | Increases compliance complexity and slows enterprise-wide AI governance implementation across multinational organizations |
| Shortage of Skilled AI Governance and Risk Management Professionals | 2.8% | AI model validation, explainability assessment, and lifecycle governance operations | AI auditability, adversarial testing, fairness assessment, compliance monitoring | Limits enterprise capability to operationalize scalable AI governance and increases dependence on external consulting support |
| Limited Explainability and Transparency in Generative AI Models | 2.6% | Enterprise AI accountability and model interpretability frameworks | Generative AI systems, large language models, autonomous AI decision engines | Restricts adoption across highly regulated sectors requiring transparent and auditable AI-driven decisions |

Lack of Standardized Global AI Governance Frameworks

Fragmented global AI governance regulations remain a significant barrier to the growth of the AI model risk management industry, because organizations find it increasingly difficult to comply with differing regional guidelines when deploying AI models. Organizations that use AI models across regions must comply with several frameworks, such as the European Union’s AI Act, SR 11-7, the General Data Protection Regulation (GDPR), and the NIST AI RMF, each of which sets different requirements for explainability, transparency, supervision, and risk classification.

The proliferation of generative AI and agentic AI tools continues to expose the limits of governance structures designed for traditional machine learning. Enterprises increasingly struggle to control third-party AI systems, audit models, assess fairness, conduct adversarial tests, and monitor continuously within dynamic AI environments. Inconsistent AI governance practices and divergent regulatory standards continue to cause confusion in the implementation of enterprise-level AI risk management.

Segmentation Analysis

The global AI Model Risk Management market is segmented based on Component, Deployment Mode, Organization Size, Risk Type, Technology Type, Industry Vertical, Application, and Region.

AI-Driven Fraud Detection Systems Fuel Enterprise Adoption of Advanced Model Risk Management Platforms

Fraud detection and prevention is emerging as one of the most important use cases in the AI model risk management industry, owing to the growing use of artificial intelligence in financial crime prevention by banks, payment service providers, insurance firms, and e-commerce companies. The use of machine learning, predictive analytics, and generative AI for transaction monitoring, identity verification, anti-money laundering, and behavioral risk assessment is creating a need for governance frameworks that can provide model explainability, transparency, accuracy, and compliance. The rise in synthetic identity theft, AI-driven cybercrime, and deepfake financial attacks is also boosting interest in model validation and monitoring solutions.

Growing regulatory scrutiny over algorithmic accountability, customer data privacy, bias prevention, and responsible AI governance practices is driving organizations to invest in infrastructure that facilitates robust AI model risk management. Adversarial testing, drift detection, auditability, automated compliance reporting, and lifecycle management functionalities are becoming more common features of organizations’ fraud analysis frameworks. The proliferation of generative AI applications in transaction intelligence and financial risk assessment processes is further driving organizations to adopt governance frameworks that are capable of addressing cybersecurity risks, third-party AI reliance, and regulatory compliance within heavily regulated financial systems.

Geographical Penetration

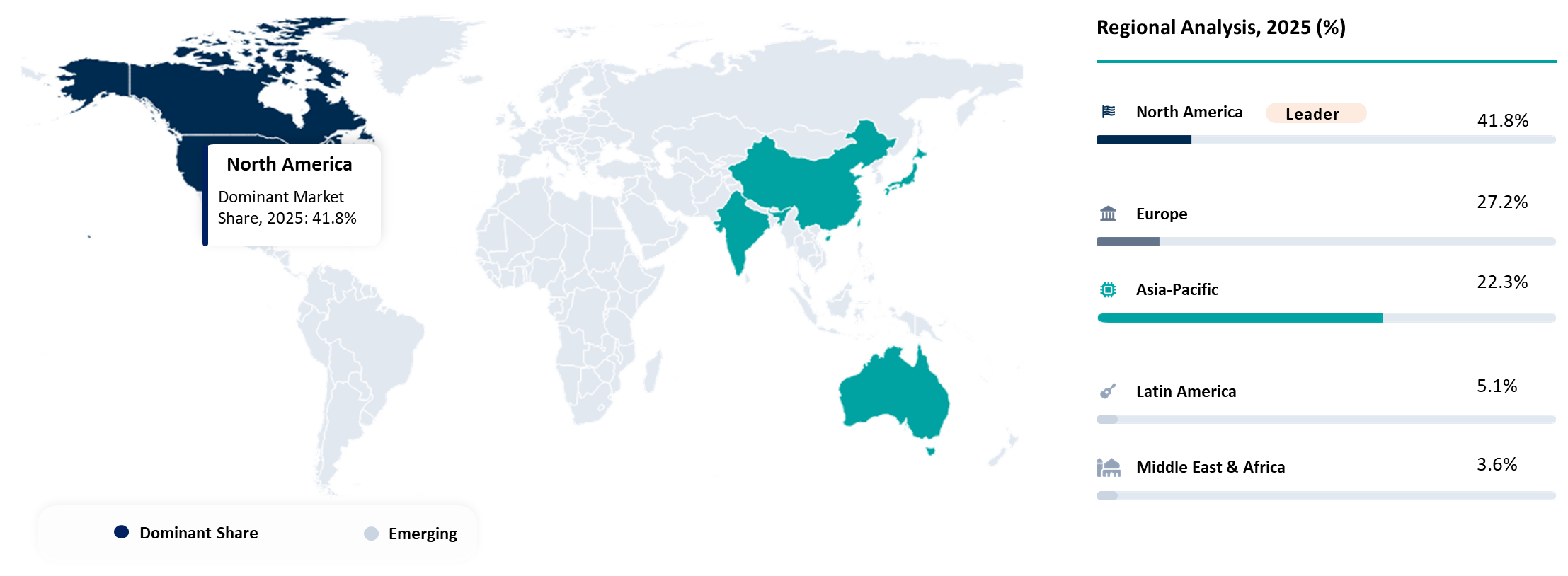

North America Strengthens Leadership in AI Model Risk Management Through Regulatory Expansion and Enterprise AI Governance Adoption

North America leads in AI model risk management due to the rapid deployment of enterprise AI applications, the availability of advanced cloud architecture, regulatory oversight, and increased adoption of generative AI in financial institutions, healthcare organizations, retail companies, government agencies, and insurers. The increasing adoption of frameworks such as NIST AI RMF, SR 11-7, ISO/IEC 42001, and federal government recommendations on AI governance will spur investments in technologies that provide model explainability, model validation, auditing, compliance automation, and ongoing lifecycle management. Increased usage of large language models, AI copilots, and autonomous AI will drive further demand for risk governance platforms.

Hyperscale cloud companies, AI solution providers, and sophisticated ModelOps platforms continue to play a significant role in maintaining regional dominance. Organizations within North America have begun to emphasize AI observability, adversarial testing, fairness evaluation, and responsible AI governance frameworks in order to support responsible AI deployments at scale. The growing convergence of AI governance, cybersecurity, and enterprise risk management is increasing interest in centralized AI risk management frameworks.

United States Accelerates AI Governance Investments Amid Rising Generative AI Adoption and Federal Oversight

The US is the largest contributor to the North American AI model risk management market due to high adoption rates of AI, generative AI, and advanced analytics in the financial services, healthcare, defense, retail, and technology industries. Growing regulatory activity, including NIST AI RMF, SR 11-7, federal AI frameworks, and industry-specific AI regulations, has driven robust investment in AI governance, model validation, auditing, explainability, and monitoring. Financial institutions and other companies are increasingly adopting AI governance platforms to mitigate risks related to hallucinations, bias, data leaks, attacks on algorithms, and third-party AI solutions.

With the rapid expansion of cloud-native AI ecosystems and the drive for organization-wide AI transformation, the need for scalable risk management systems for AI models remains high in the US market. Enterprises are incorporating AI observability, lifecycle management, compliance automation, and privacy-preserving technologies into their enterprise risk management processes to ensure the secure and responsible use of large language models and autonomous AI. Growing regulatory pressure on AI accountability and operational transparency is also driving adoption.

Canada Expands Responsible AI Governance Frameworks to Support Secure Enterprise AI Adoption

Canada represents a promising market for AI model risk management as organizations become increasingly interested in ethical AI governance, responsible AI implementation, and digital transformation strategies. Canada's growing alignment with international AI governance standards such as ISO/IEC 42001, NIST AI RMF, and other global AI compliance standards is leading organizations to invest more in model transparency, explainability, validation, and lifecycle governance. The growth of national AI innovation initiatives, cloud AI computing, and public-private technology partnerships is supporting this process.

The adoption of more advanced generative AI tools, analytics, and intelligent automation is generating more interest in AI observability, AI fairness testing, cybersecurity monitoring, and automated compliance reporting solutions among Canadian companies. The financial services sector and government agencies are increasingly focused on implementing governance frameworks that support privacy, data sovereignty, AI accountability, and third-party AI management in complex AI environments. Trustworthy AI practices are anticipated to fuel market growth in Canada in the coming years.

Competitive Landscape

- Competition in the AI model risk management market has been accelerating as enterprises increase their reliance on generative AI, regulatory scrutiny intensifies, and the need for responsible AI governance grows. Leading technology firms like IBM, Microsoft, AWS, Google, and SAS have a significant presence in the market through AI governance platforms, cloud-based architecture, explainable AI, and compliance management tools at scale. Competition is becoming more focused on automated model validation, model lifecycle governance, real-time monitoring, auditability, bias identification, and operational transparency.

- The market is seeing fast-paced growth from providers that focus on AI governance and model monitoring, such as ModelOp, ValidMind, Credo AI, Yields, DataRobot, and H2O.ai. These providers are differentiating themselves through offerings in AI regulatory compliance automation, AI observability, governance orchestration, and model risk lifecycle management for highly regulated sectors like BFSI, healthcare, and insurance. Collaborations, cloud integration, and generative AI governance will be critical to competing as companies continue to favor policy-driven AI deployments.

- Key players include IBM, Microsoft, Amazon Web Services, Inc., Google, SAS Institute Inc., DataRobot, Inc., LogicGate, Inc., ModelOp, ValidMind Inc., NAVEX Global, Inc., H2O.ai, Inc., C3.ai, Inc., Credo.AI Corp., Yields and Moody’s Analytics, Inc.

Key Developments

- March 2026 - The Monetary Authority of Singapore developed the MindForge AI Risk Management Toolkit for the financial services industry, partnering with 24 stakeholders from the sector. It aims to provide practical guidelines on AI governance, AI lifecycle management, risk assessment, and AI oversight for traditional AI, generative AI, and agentic AI. The toolkit responds to increasing interest in standardizing AI risk management in the financial services sector.

- February 2026 - FIS unveiled the Insurance Risk Suite AI Assistant, an AI-enabled tool that aims to improve risk model management and actuarial processes in the insurance industry. The application offers continuous assistance in developing, maintaining, and analyzing risk models, allowing companies to increase efficiency, respond to risks faster, and build robust risk model governance capabilities.

- November 2025 - Tiger Analytics and Databricks have introduced a scalable Model Risk and AI Governance solution for BFSI organizations to improve the governance, compliance, auditability, and lifecycle management of AI models.

- July 2025 - Experian launched Experian Assistant for Model Risk Management, an AI-driven governance tool that integrates with the Ascend Platform using ValidMind technology. The solution helps organizations automate model documentation, validation, monitoring, and compliance processes throughout the AI model lifecycle. The launch is intended to improve organizations' ability to handle audits, meet regulations, and manage AI governance, enabling quicker deployment and better visibility when using generative AI technology.

Why Choose DataM?

- Technological Innovations: Explores the latest advancements in AI Model Risk Management including generative AI governance, explainable AI (XAI), AI observability, automated model validation, adversarial testing, lifecycle monitoring, and ModelOps integration that are improving enterprise AI transparency, compliance readiness, and operational reliability across regulated industries.

- Product Performance & Market Positioning: Evaluates how leading AI governance platforms, cloud hyperscalers, and model risk management providers perform across banking, healthcare, insurance, retail, and government sectors. The analysis compares capabilities in model monitoring, explainability, auditability, fairness assessment, compliance automation, cybersecurity protection, and real-time AI risk detection, highlighting competitive differentiation strategies across global markets.

- Real-World Evidence: Highlights practical use cases of AI Model Risk Management across fraud detection, credit risk assessment, anti-money laundering, customer analytics, underwriting, compliance management, and operational automation environments. It demonstrates measurable improvements in governance efficiency, risk reduction, model transparency, regulatory alignment, and AI-driven decision accountability.

- Market Updates & Industry Changes: Tracks major industry developments including expansion of generative AI regulations, implementation of the EU AI Act, adoption of NIST AI RMF frameworks, growth of enterprise AI governance investments, emergence of agentic AI oversight requirements, and rising adoption of responsible AI frameworks across North America, Europe, and Asia-Pacific.

- Competitive Strategies: Analyzes how leading market participants are expanding their footprint through AI governance platform innovation, cloud-native deployment models, strategic partnerships, generative AI risk management capabilities, AI observability enhancements, and integration of compliance automation and lifecycle governance technologies.

- Pricing & Market Access: Explains pricing structures across enterprise AI governance platforms, subscription-based AI risk management solutions, cloud-native deployment models, and managed AI compliance services. It also reviews enterprise adoption patterns, licensing models, consulting support, and regional expansion strategies supporting market penetration.

- Market Entry & Expansion: Identifies growth opportunities linked to rising adoption of large language models, generative AI governance, third-party AI risk oversight, and enterprise-wide responsible AI initiatives. It also outlines strategies for vendors to expand globally through cloud ecosystem partnerships, regulatory alignment capabilities, scalable governance architectures, and industry-specific AI compliance solutions.

Target Audience 2026

- Financial Institutions & Banking Organizations: Banks, insurance providers, credit institutions, and capital market firms deploying AI model risk management solutions for fraud detection, credit underwriting, regulatory compliance, anti-money laundering, and operational risk management applications.

- Enterprise AI & Technology Providers: Cloud hyperscalers, AI platform providers, enterprise software vendors, and generative AI developers integrating AI governance, explainability, observability, and lifecycle monitoring capabilities into enterprise AI ecosystems.

- Healthcare & Life Sciences Organizations: Healthcare providers, pharmaceutical companies, and digital health platforms utilizing AI governance and model validation solutions to improve transparency, regulatory compliance, patient data protection, and reliability of AI-driven diagnostics and clinical decision systems.

- AI Governance, Compliance & Risk Management Teams: Enterprise risk management departments, compliance officers, ModelOps teams, cybersecurity professionals, and AI governance specialists responsible for model validation, auditability, fairness assessment, and continuous AI monitoring across regulated environments.

- Government & Regulatory Authorities: Government agencies, financial regulators, data protection authorities, and public sector organizations establishing AI governance frameworks, compliance standards, cybersecurity policies, and responsible AI deployment strategies.

- Investors & Private Equity Firms: Investment firms, venture capital companies, and institutional investors monitoring growth opportunities linked to generative AI governance, AI compliance infrastructure, enterprise AI transformation, and responsible AI technology adoption.

- Consulting Firms & System Integrators: Technology consulting companies, digital transformation providers, and system integration firms supporting enterprises in AI governance implementation, compliance modernization, lifecycle management, and enterprise-scale AI risk management deployment.